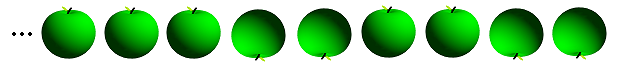

I’m going to start by describing a game that seems completely unrelated to sphere packing. The Conway-Hamming game involves a half-infinite row of green apples, each of which can either point up or down:

In any configuration, all but finitely many green apples (occasionally referred to as ‘grapples’) point upwards. The game proceeds as follows:

- Players alternate taking turns. If a player is incapable of moving, he/she loses.

- Each turn, a player flips the orientation of between 1 and 3 apples, where the leftmost of those apples must change from pointing down to up.

It’s clear that this process must eventually terminate. Specifically, we can represent each configuration by a non-negative integer, where each 1 in the binary representation corresponds to an apple pointing down. For instance, the configuration above is represented by 51.

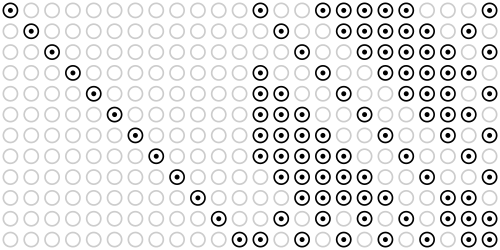

The sequence of losing positions is given by the sequence {0, 15, 51, 60, 85, 90, 102, 105, 150, 153, 165, 170, 195, 204, 240, 255, …} (A075928 in the OEIS). Specifically, we construct this sequence term-by-term, where each term in the sequence is the smallest natural number which differs from all of the previous terms by a Hamming distance of at least 4. (Hamming distance is the number of positions in which the binary representations of two integers differ.)

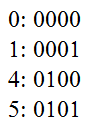

There’s actually a quick method of verifying whether a sequence of apples is a losing position. We write down the binary representations of the positions of each down-pointing apple. For instance, in the first diagram, we have apples in positions 0, 1, 4 and 5.

We then look at each of the columns. (I’ve shown four here, although there are actually infinitely many columns consisting entirely of ‘0’s extending to the left.) The fact that this is a losing position is equivalent to the following statement:

- In each column, there are an even number of ‘1’s and an even number of ‘0’s.

Error-correcting codes

The losing positions in the Conway-Hamming apple-flipping game correspond to ‘codewords’ in an error-correcting code known as the Hamming code. Suppose Alice sends Bob a codeword, namely a sequence of apples corresponding to a losing position:

Eve, who is notorious for eating forbidden fruits and attempting to disrupt private communication between Alice and Bob, decides to devour up to three of the apples whilst leaving the others untouched.

Amazingly, Bob can actually recover the original codeword from this partial information. If Eve eats four apples, however, some genuine information is lost. Inverting an apple is as bad as eating two apples (in a technical sense which I’ll elaborate upon later), so inverting two apples would also cause problems for Bob.

A variant of the game is where there are 24 apples (instead of infinitely many), and you’re allowed to flip up to seven apples (instead of merely three). The 4096 losing positions correspond to codewords in a very special code known as the binary Golay code. It is generated by the following generator matrix (over the finite field F_2):

000000000000000011111111 000000000000111100001111 000000000011001100110011 000000000101010101010101 000000001001011001101001 000000110000001101010110 000001010000010101100011 000010010000011000111010 000100010001000101111000 001000010001001000011101 010000010001010001001110 100000010001011100100100

Permuting the rows and columns of this matrix results in various equivalent codes. For instance, here is another equally valid matrix:

The left half is the identity matrix; the right half is the complement of the adjacency matrix of the icosahedron. The automorphism group of a binary Golay code (i.e. the permutations of the 24 bits that map codewords to codewords) is the Mathieu group M24. It’s also involved in a particular construction of the Leech lattice, which is in turn used to construct the famous Monster group.

The binary Golay code is even better than the Hamming codes, functioning even if Eve eats up to seven apples. Since it is so efficient, the Golay code was used by the Voyager probes to send photographic information back to Earth. That’s the only practical application of sporadic simple groups of which I know.

Checking and repairing codewords can be done through a pencil-and-paper algorithm known as the Miracle Octad Generator. This, confusingly, has the same acronym as the Mathematical Olympiad for Girls. I intend to write a cp4space post on these subjects later, although I’ll take this opportunity to segue onto the topic of UK mathematical olympiads and wish the best of luck to everyone who sat the BMO2 exam yesterday.

The  game

game

(Yes, I’ve been informed how to embed LaTeX directly into cp4space posts.)

Here’s a game I’ve designed, inspired by Conway’s apple-flipping game. The game position is a n-tuple of non-negative reals (where n is fixed for a particular game). For instance, a possible configuration in the game for n = 3 is (0, 28/5, π). These can be visualised as coordinates in Euclidean space.

On each turn, you’re allowed to move to a position as long as it’s a (Euclidean) distance of less than 1 away from the current position, and that the new position lexicographically precedes the previous one. In other words, (a, b, c) → (d, e, f) is a valid move if and only if both of these conditions hold:

, or

, or

.

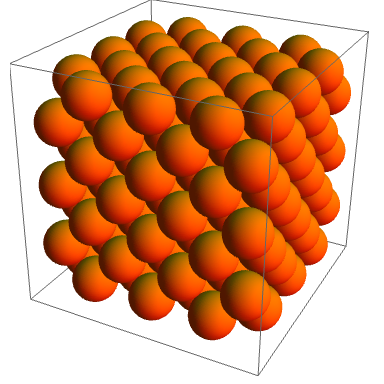

The losing positions for n = 3 are the centres of the spheres in the hexagonal close packing, which is similar to the arrangements of oranges favoured by greengrocers.

For n = 2, the basic hexagonal lattice gives the losing positions. As for n = 1, it should be obvious that the integer points are precisely the losing positions (if you’re not in a losing position, the winning strategy is to replace x with floor(x) on each of your turns until your opponent is left with 0).

Real lexicographical order isn’t well, so it is pretty obvious that there are games which don’t end. However, this is easy to prove that either player can force end of game: for n-tuple game one can decrease first number to 0, thus reducing problem to n-1 tuples. Maybe your winning possibility wasn’t reaching minimum, or maybe you thought about some infinite game prevention?

A winning position is defined as one where that player can force a win irrespective of the opponent’s strategy. Even though infinite games are possible, such as {(1,1,1), (1/2,1/2,1/2), (1/3,1/3,1/3), (1/4,1/4,1/4), …}, there is no incentive for either player to do so, and jumping to a non-lattice point gives the opponent a winning position.

Pingback: Analysing Escher | Complex Projective 4-Space

Superb website you have here but I was curious about

if you knew of any community forums that cover the same topics talked about in this article?

I’d really like to be a part of community where I can get responses from

other experienced individuals that share the same interest.

If you have any recommendations, please let me know.

Thanks a lot!

I think that the closest thing to what you describe is a mailing list that’s been active for about 30 years:

https://mailman.xmission.com/cgi-bin/mailman/listinfo/math-fun

Alternatively, there are always the comments at the foot of these pages.